In November 2016, Google announced a major change to the way it crawls websites and began making its index mobile-first, meaning that the mobile version of a given website becomes the starting point for what Google includes in its index. In May 2019, Google updated the rendering engine of its crawler to the latest version of Chromium (74 at the time of the announcement).

In December 2019, Google began updating the User-Agent string of its crawler to reflect the latest Chrome version used by its rendering service. The delay was meant to give webmasters time to update code that responded to particular bot User-Agent strings. Google ran evaluations and felt confident that the impact would be minor.
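Code that compares the crawler's full User-Agent string against a fixed value is the kind of code this change could break. A minimal sketch of a more tolerant check follows; the function names and the sample strings are illustrative assumptions, and because a User-Agent header can be spoofed, this shows only the string handling, not a way to verify that a request really comes from Google.

```python
import re

# Match the stable product token rather than the whole string, so the check
# keeps working when the Chrome version inside the string changes.
GOOGLEBOT_TOKEN = re.compile(r"\bGooglebot\b", re.IGNORECASE)
CHROME_VERSION = re.compile(r"\bChrome/(\d+)(?:\.\d+)*")


def is_googlebot(user_agent: str) -> bool:
    """Return True if the User-Agent string contains the Googlebot token."""
    return bool(GOOGLEBOT_TOKEN.search(user_agent))


def chrome_major_version(user_agent: str):
    """Return the Chrome major version in the string, or None if absent."""
    match = CHROME_VERSION.search(user_agent)
    return int(match.group(1)) if match else None


# Illustrative sample strings (assumed for this example).
EVERGREEN_UA = (
    "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; "
    "Googlebot/2.1; +http://www.google.com/bot.html) "
    "Chrome/74.0.3729.131 Safari/537.36"
)
LEGACY_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

if __name__ == "__main__":
    for ua in (EVERGREEN_UA, LEGACY_UA):
        print(is_googlebot(ua), chrome_major_version(ua))
```

Matching on the token keeps the bot check independent of whatever Chrome version the rendering service happens to report.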

If desired, a page can be explicitly excluded from a search engine's database by using a robots meta tag (typically `<meta name="robots" content="noindex">`). When a search engine visits a site, the robots.txt file located in the root directory is the first file crawled.

This file is then parsed and instructs the robot as to which pages may and may not be crawled. Because a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not want crawled. Pages typically prevented from being crawled include login pages, shopping carts, and user-specific content such as results from internal searches.
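To make the parsing step concrete, here is a minimal sketch using Python's standard `urllib.robotparser` module. The robots.txt content, the `ExampleBot` name, and the example.com URLs are assumptions made up for illustration; a real crawler would fetch the file from the site's root directory.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks the page types mentioned above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /login
Disallow: /cart
Disallow: /search
"""


def build_parser(robots_text: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

if __name__ == "__main__":
    for path in ("/login", "/cart", "/search?q=shoes", "/products/widget"):
        url = "https://example.com" + path
        print(path, parser.can_fetch("ExampleBot", url))
```

A crawler that reuses an old parsed copy instead of re-reading the file would keep applying the old rules, which is how a page can be crawled after the webmaster has disallowed it.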

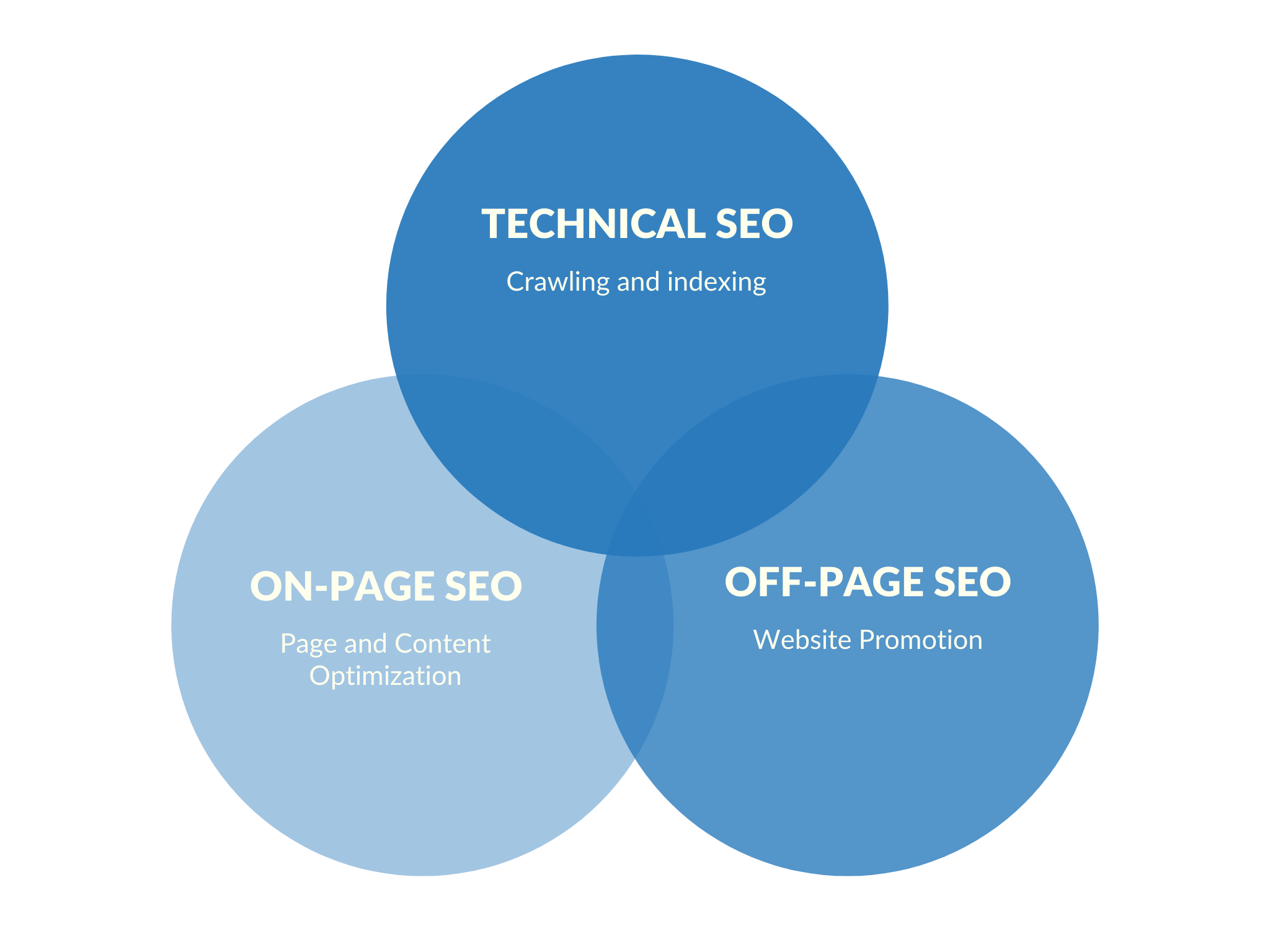

A variety of methods can increase the prominence of a web page within the search results. Cross-linking between pages of the same website, so that important pages receive more links, may improve their visibility. Writing content that includes frequently searched keyword phrases, so that it is relevant to a wide range of search queries, will also tend to increase traffic.

Adding relevant keywords to a web page's metadata, including the title tag and meta description, will tend to improve the relevancy of a site's search listings, thus increasing traffic. URL canonicalization of web pages accessible through multiple URLs, using the canonical link element or 301 redirects, can help make sure that links to different versions of the URL all count towards the page's link popularity score.
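As a rough illustration of where these pieces of metadata live in a page, the sketch below uses Python's standard `html.parser` module to pull out the title, the meta description, and the canonical link. The class name, the sample markup, and the example.com URL are assumptions for this example and are not taken from any particular SEO tool.

```python
from html.parser import HTMLParser


class HeadMetadataParser(HTMLParser):
    """Collect the title, meta description, and canonical URL of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.canonical = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.description = attr.get("content")
        elif tag == "link" and "canonical" in (attr.get("rel") or "").lower().split():
            self.canonical = attr.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


# Hypothetical page used only for illustration.
SAMPLE_HTML = """<html><head>
<title>Blue Widgets | Example Store</title>
<meta name="description" content="Hand-made blue widgets, shipped worldwide.">
<link rel="canonical" href="https://example.com/widgets/blue">
</head><body></body></html>"""

metadata = HeadMetadataParser()
metadata.feed(SAMPLE_HTML)

if __name__ == "__main__":
    print(metadata.title)
    print(metadata.description)
    print(metadata.canonical)
```

If the same page were also reachable at, say, a URL with tracking parameters, the canonical link above would tell search engines which version should receive the credit for inbound links.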

Search engines try to keep spam and low-value content out of their indexes. Industry commentators have classified SEO techniques, and the practitioners who use them, as either white hat SEO or black hat SEO. White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned, either temporarily or permanently, once the search engines discover what they are doing.

Search engine guidelines are not written as a series of rules or commandments, which is an important distinction to keep in mind. Black hat SEOs typically disregard these guidelines and instead try to manipulate search engine indexes so that their content ranks higher, or sooner, than it otherwise would.

White hat SEO is in many ways comparable to web development that promotes accessibility. Black hat SEO attempts to improve rankings in ways that involve deception or that search engines disapprove of. One black hat technique uses hidden text, either as text colored the same as the page background, in a hidden div tag, or positioned off screen.
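The hidden-text patterns described above can be roughly approximated in code. The following is a simple heuristic sketch, not a description of how any search engine detects them: it flags only inline `style` attributes, does not resolve stylesheets or compare text color with background color, and will also flag legitimate uses of `display:none`. The names and the sample markup are assumptions for illustration.

```python
import re
from html.parser import HTMLParser

# Inline-style patterns that often accompany hidden text (heuristic only).
SUSPICIOUS_STYLE = re.compile(
    r"display\s*:\s*none"
    r"|visibility\s*:\s*hidden"
    r"|(?:left|text-indent)\s*:\s*-\d{3,}",
    re.IGNORECASE,
)


class HiddenStyleFinder(HTMLParser):
    """Record start tags whose inline style matches a suspicious pattern."""

    def __init__(self):
        super().__init__()
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        style = dict(attrs).get("style") or ""
        if SUSPICIOUS_STYLE.search(style):
            self.flagged.append((tag, style))


def find_hidden_styles(html: str):
    """Return (tag, style) pairs for elements that look hidden."""
    finder = HiddenStyleFinder()
    finder.feed(html)
    return finder.flagged


# Hypothetical markup used only for illustration.
SAMPLE = (
    "<p>Visible copy.</p>"
    '<div style="display:none">cheap widgets cheap widgets</div>'
    '<span style="position:absolute; left:-9999px">more keywords</span>'
)

if __name__ == "__main__":
    for tag, style in find_hidden_styles(SAMPLE):
        print(tag, "->", style)
```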

Another category sometimes used is grey hat SEO. This sits between the black hat and white hat approaches: the methods employed avoid getting the site penalized but do not aim to produce the best content for users. Grey hat SEO is focused entirely on improving search engine rankings. Search engines may penalize sites they discover using black or grey hat methods, either by reducing their rankings or by eliminating their listings from their databases altogether.

One example was Google's February 2006 removal of both BMW Germany and Ricoh Germany for use of deceptive practices. Both companies, however, quickly apologized, fixed the offending pages, and were restored to Google's search engine results page. SEO is not an appropriate strategy for every website, and other Internet marketing strategies, such as paid advertising through pay-per-click (PPC) campaigns, can be more effective, depending on the site operator's goals.

The difference between search engine marketing (SEM) and SEO is most simply described as the difference between paid and unpaid priority ranking in search results. SEM focuses on prominence more than relevance; website developers should give SEM serious consideration, since most users navigate to the first listings in their search results.

In November 2015, Google released a full 160-page version of its Search Quality Rating Guidelines to the public, which revealed a shift in its focus towards "usefulness" and mobile local search. In recent years the mobile market has exploded, overtaking the use of desktops.

In October 2016, StatCounter analyzed 2.5 million websites and found that 51.3% of the pages were loaded by a mobile device. Google has been one of the companies capitalizing on the popularity of mobile usage by encouraging websites to use Google Search Console and the Mobile-Friendly Test, which let companies check their websites against the search engine results and determine how user-friendly those websites are.

Search engines are not paid for organic search traffic, their algorithms change, and there are no guarantees of continued referrals. Because of this lack of guarantees and the uncertainty it brings, a business that relies heavily on search engine traffic can suffer major losses if the search engines stop sending visitors.

According to Google's CEO at the time, Eric Schmidt, Google made over 500 algorithm changes in 2010, which works out to almost 1.5 per day. It is considered wise for website operators to free themselves from dependence on search engine traffic. Usability and accessibility for users have also become increasingly important for SEO.

Search engines' market shares vary from market to market, as does competition. In 2003, Danny Sullivan stated that Google represented about 75% of all searches. In markets outside the United States, Google's share is often larger, and Google remained the dominant search engine worldwide as of 2007. As of 2006, Google had an 85-90% market share in Germany.

As of June 2008, Google's market share in the UK was close to 90%, according to Hitwise. That level of market share is achieved in a number of countries. As of 2009, there were only a few large markets where Google was not the leading search engine. In most cases, when Google is not leading in a given market, it is lagging behind a local player.

Successful search optimization for international markets may require professional translation of web pages, registration of a domain name with a top-level domain in the target market, and web hosting that provides a local IP address. Otherwise, the fundamental elements of search optimization are essentially the same, regardless of language.

In its lawsuit against Google, SearchKing claimed that Google's tactics to prevent spam indexing constituted tortious interference with contractual relations. On May 27, 2003, the court granted Google's motion to dismiss the complaint because SearchKing "failed to state a claim upon which relief may be granted." In March 2006, KinderStart filed a lawsuit against Google over search engine rankings.

On March 16, 2007, the United States District Court for the Northern District of California (San Jose Division) dismissed KinderStart's complaint without leave to amend, and partially granted Google's motion for Rule 11 sanctions against KinderStart's attorney, requiring him to pay part of Google's legal expenses.

So... what is the frustrated online marketer to do? Adapt and conquer. The quicker you learn the nuances of how to work with Google's algorithms, the sooner you will gain traffic and, with it, market share. Let's face it: each passing day, week, month, and year brings even MORE competition into the landscape known as online marketing. Put on your big boy/girl pants and SEIZE THE DAY!